Comparing Netflix Conductor's Architecture with Flowable's

EDIT: This blog post took so long to write that, by the time I published it, I discovered that just five days earlier Netflix had announced they "will discontinue maintenance of Conductor OSS on GitHub". Here's my post anyway...

I have spent several years developing a service (within a big organisation) which uses the workflow engine Flowable to orchestrate calls to many different services. Whilst the project was a success and we saw the benefits of using a workflow engine, we did encounter a number of rather significant issues with Flowable itself (I'll explain these in another post later).

This leads me to think that I would use a workflow engine again, but not necessarily Flowable. So what other workflow engines are out there? Netflix Conductor is one, and it is the one I evaluate in this post.

First, some background

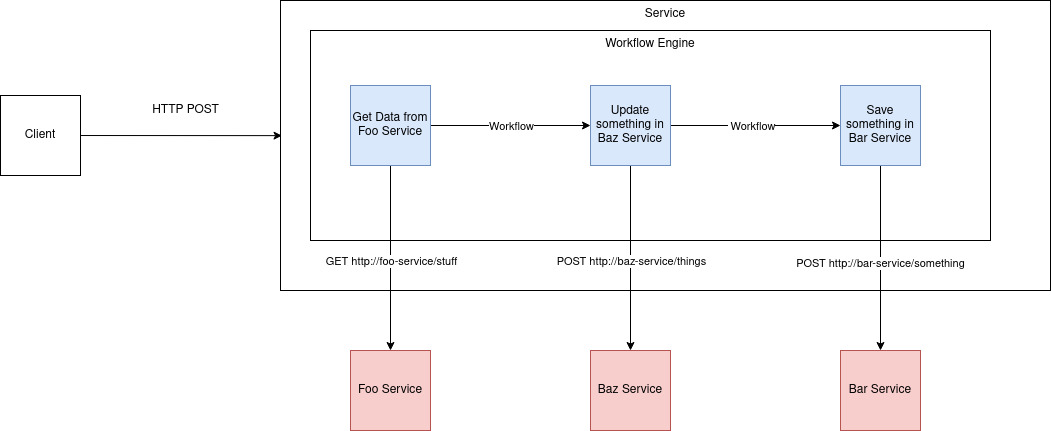

The service I worked on provided a RESTful API to its clients. On receiving a request, it executed a workflow which called many other systems. At a high level, the steps are as follows:

- Client calls service (over HTTP)

- The incoming request is mapped to a workflow internally

- The workflow runs. Each task is just some JVM-based code (Kotlin in our case) which calls other services.

- Once the workflow completes, the client is informed (either by an HTTP callback or an event).

Diagrams created using diagrams.net
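The request flow above can be made concrete with a minimal Java sketch. Every name in it (`WorkflowRunner`, `SequentialRunner`, `OrderEndpoint`, the `placeOrder` workflow) is hypothetical. `SequentialRunner` is a toy that only stands in for a real engine such as Flowable; it is not Flowable's API. The point is that the HTTP-facing code only sees a narrow interface, so whatever runs behind it stays hidden from clients.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;
import java.util.function.Function;

// Hypothetical names throughout; this is not Flowable's API.
// A task is plain JVM code: it reads the workflow variables and returns new ones.
interface Task extends Function<Map<String, String>, Map<String, String>> {}

// The only view of "the workflow engine" that the HTTP layer gets.
interface WorkflowRunner {
    Map<String, String> run(String workflowName, Map<String, String> input);
}

// Toy engine standing in for Flowable: runs a named workflow's tasks in order.
class SequentialRunner implements WorkflowRunner {
    private final Map<String, List<Task>> workflows;

    SequentialRunner(Map<String, List<Task>> workflows) {
        this.workflows = workflows;
    }

    @Override
    public Map<String, String> run(String workflowName, Map<String, String> input) {
        Map<String, String> vars = new HashMap<>(input);
        for (Task task : workflows.get(workflowName)) {
            vars.putAll(task.apply(vars)); // in production each task calls another service
        }
        return vars;
    }
}

// The service's public face: map the POST body to a workflow, then notify the client.
class OrderEndpoint {
    private final WorkflowRunner runner;
    private final Consumer<Map<String, String>> notifyClient; // HTTP callback or event

    OrderEndpoint(WorkflowRunner runner, Consumer<Map<String, String>> notifyClient) {
        this.runner = runner;
        this.notifyClient = notifyClient;
    }

    void handlePost(Map<String, String> body) {
        notifyClient.accept(runner.run("placeOrder", body));
    }
}
```

Because `OrderEndpoint` depends only on `WorkflowRunner`, the engine behind it could in principle be swapped without clients noticing.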

There were a number of good design aspects to this approach:

- Encapsulation - The internals of the workflow engine, and even the fact that we were using one, were hidden. Clients just sent POST requests to the service, which handled them.

- The many dependent services (pictured in red) had no clue they were being called by a workflow engine - there was no difference to them - they just received HTTP requests from a client.

All of this meant that we could (in theory) replace our inner workings with a different workflow engine, or abandon workflows altogether and "just" replace it all with code. In practice, our problems with Flowable were never bad enough to justify the effort this would have taken.

Netflix Conductor

Disclaimer: I have not used Conductor in production - only for a home demo project.

At first I thought Netflix Conductor was a vast improvement on Flowable.

- You define workflows in simple JSON files which are easy to change by hand and to visualise in freely available tools. Flowable, on the other hand, uses very complicated XML files (it implements the mammoth BPMN spec) which are practically impossible to edit by hand and hard to edit even with their UI.

- Workflows are coordinated by a central server (or cluster of servers) which scales horizontally because it does not rely on a central RDBMS. It has built-in support for Redis and Cassandra (relational databases can be used if you prefer) and also uses Elasticsearch for "indexing the execution of flows".

Conductor seems to encourage a different approach from the architecture above: the services performing the tasks (workers) are not called. Instead, they contact a central orchestration service and poll it for work (tasks) to complete.

The diagram below is based on my high-level understanding of what talks to what.

- Clients invoke workflows on the Conductor server, either through the Conductor client library or by sending HTTP requests to the server. Either way, the client needs to know about Conductor and about the workflow it wants to invoke.

- The services (workers) poll the server to see if there are any tasks for them to run. If there are, they run them and reply with the result.

- The output is then passed to the next worker.
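That pull model can be illustrated with a toy, single-threaded Java sketch. `ToyServer` and `ToyWorker` are hypothetical classes written for this illustration. They are not the Conductor client or server API, and they ignore everything a real server handles around retries, timeouts and persistence. The server keeps one queue per task type, each worker asks only for work of its own type, and a completed task's output is queued as the next task's input.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.function.UnaryOperator;

// Toy model of the pull-based pattern; NOT the Conductor client or server API.
// Simplification: each task type appears once, in order, in the workflow.
class ToyServer {
    private final List<String> steps;
    private final Map<String, ArrayDeque<String>> queues = new HashMap<>();
    final List<String> finishedOutputs = new ArrayList<>();

    ToyServer(List<String> steps) {
        this.steps = steps;
    }

    void startWorkflow(String input) {
        enqueue(steps.get(0), input);
    }

    // Workers ask: "is there any work of my type?"
    Optional<String> poll(String taskType) {
        return Optional.ofNullable(queues.computeIfAbsent(taskType, t -> new ArrayDeque<>()).poll());
    }

    // A worker reports its result; the output becomes the next task's input.
    void complete(String taskType, String output) {
        int next = steps.indexOf(taskType) + 1;
        if (next < steps.size()) {
            enqueue(steps.get(next), output);
        } else {
            finishedOutputs.add(output);
        }
    }

    private void enqueue(String taskType, String input) {
        queues.computeIfAbsent(taskType, t -> new ArrayDeque<>()).add(input);
    }
}

class ToyWorker {
    private final String taskType;
    private final UnaryOperator<String> logic;

    ToyWorker(String taskType, UnaryOperator<String> logic) {
        this.taskType = taskType;
        this.logic = logic;
    }

    // One polling round trip; a real worker repeats this on a timer.
    boolean pollOnce(ToyServer server) {
        Optional<String> input = server.poll(taskType);
        input.ifPresent(in -> server.complete(taskType, logic.apply(in)));
        return input.isPresent();
    }
}
```

A worker that polls before any work exists for it simply gets nothing back. In a real deployment, that empty round trip happens on every polling interval.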

Reasons I dislike this

All services involved in the workflow are coupled to Conductor

Many services will now depend on Netflix Conductor libraries. In the above diagram, workers one and two have to depend on the conductor-client library to poll the server for tasks. It is a small library to import, but I'd still argue that choosing Conductor is a big decision: backing out would take work in a lot of services (possibly owned by other teams) if you decide it was the wrong idea. It's also worth pointing out that, at the time of writing, the latest version (3.15) pulls in a rather old version of jersey-client from 2017, which caused me some real headaches when creating my demo app!

Dependencies seem the wrong way around

How do you test this?

I spent a lot of time googling for end-to-end tests of Netflix Conductor workflows. I couldn't find anyone who had done this, and I began to think it might be because it's too complex, or perhaps because it counts as end-to-end environment testing, which many people advise against. Going back to our Flowable-based system, the endpoints (which invoked workflows) could be tested with mocked dependencies relatively easily, and this gave us a high degree of confidence that the workflows actually worked.
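For comparison, the shape of the tests we relied on can be sketched in plain Java. `PlaceOrderWorkflow`, `InventoryClient`, `PaymentClient` and `FakePayments` are hypothetical. The "workflow" here is ordinary code standing in for an endpoint that starts an engine-run workflow. What matters is the structure: the orchestration logic runs for real, while every downstream service is replaced by a fake whose calls can be inspected.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical downstream services; in production each one is an HTTP client.
interface InventoryClient {
    boolean reserve(String sku);
}

interface PaymentClient {
    void charge(String orderId);
}

// Plain code standing in for the endpoint plus the workflow it starts.
class PlaceOrderWorkflow {
    private final InventoryClient inventory;
    private final PaymentClient payments;

    PlaceOrderWorkflow(InventoryClient inventory, PaymentClient payments) {
        this.inventory = inventory;
        this.payments = payments;
    }

    String run(String orderId, String sku) {
        if (!inventory.reserve(sku)) {
            return "REJECTED"; // never charge for stock we could not reserve
        }
        payments.charge(orderId);
        return "COMPLETED";
    }
}

// Hand-rolled fake that records calls; a mocking library or stub HTTP server plays the same role.
class FakePayments implements PaymentClient {
    final List<String> charged = new ArrayList<>();

    @Override
    public void charge(String orderId) {
        charged.add(orderId);
    }
}
```

A test then drives the workflow with fakes and checks both the result and which dependencies were called.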

If I did use Conductor, I'd try to reduce the coupling to it:

- Client sends a POST request to a "Rest Facade" to start the workflow. The client speaks the language of the domain and isn't coupled to the concept of a workflow.

- The Rest Facade delegates to the Conductor Server.

- All workflow tasks are defined in a single service, named here the "Task Runner", which polls the server using the standard Conductor client.

- Each worker (within the Task Runner) invokes the downstream services via HTTP.
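Here is a minimal sketch of the Rest Facade. It assumes a hypothetical `WorkflowStarter` interface owned by the facade. A production implementation of that interface would wrap the Conductor client, but its exact calls are deliberately left out rather than guessed. `AccountFacade`, `CreateAccountRequest` and the `create_account` workflow name are likewise made up for illustration.

```java
import java.util.HashMap;
import java.util.Map;

// Domain request sent by the client; it carries no workflow concepts.
final class CreateAccountRequest {
    private final String customerId;
    private final String plan;

    CreateAccountRequest(String customerId, String plan) {
        this.customerId = customerId;
        this.plan = plan;
    }

    String customerId() { return customerId; }
    String plan() { return plan; }
}

// Hypothetical port owned by the facade. The production implementation would wrap
// the Conductor client; those calls are intentionally not shown here.
interface WorkflowStarter {
    /** Starts the named workflow and returns its execution id. */
    String start(String workflowName, int version, Map<String, Object> input);
}

// Rest Facade logic (HTTP routing omitted): translates a domain request into a workflow start.
class AccountFacade {
    private final WorkflowStarter starter;

    AccountFacade(WorkflowStarter starter) {
        this.starter = starter;
    }

    String createAccount(CreateAccountRequest request) {
        Map<String, Object> input = new HashMap<>();
        input.put("customerId", request.customerId());
        input.put("plan", request.plan());
        return starter.start("create_account", 1, input);
    }
}
```

The client only ever sees `CreateAccountRequest`, so Conductor could later be replaced behind `WorkflowStarter` without the client noticing. In tests, the interface can be a lambda that records what it was asked to start.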

The benefits of this implementation are:

- We have reduced our stack's coupling to Conductor.

- We are able to test the workflow from end to end in a meaningful way. I'll write more about this in a later blog post.

The downsides to this:

- We have increased the complexity of our architecture. I count two extra components on the diagram that have to be kept running and maintained at all times!

- Each worker still has to poll the server looking for work. This means lots of communication overhead and delays in task execution that can only be reduced by decreasing the polling interval (and increasing communication overhead even further).
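A back-of-envelope model makes that trade-off concrete. It is an illustration, not measured data. It assumes each worker polls on a fixed interval and that a task becomes ready at a random point in a polling cycle, so on average it waits half an interval before being picked up. `PollingMath` is a hypothetical helper.

```java
// Back-of-envelope polling model: an illustration under stated assumptions, not measured data.
final class PollingMath {
    private PollingMath() {}

    // Total poll requests hitting the server per minute.
    static double pollsPerMinute(int workers, double intervalSeconds) {
        return workers * 60.0 / intervalSeconds;
    }

    // Assumes a task becomes ready at a uniformly random point in a worker's polling cycle,
    // so on average it waits half an interval before a worker picks it up.
    static double averagePickupDelaySeconds(double intervalSeconds) {
        return intervalSeconds / 2.0;
    }

    // In a chain of sequential tasks, every hand-off pays that delay again.
    static double averageWorkflowDelaySeconds(int sequentialTasks, double intervalSeconds) {
        return sequentialTasks * averagePickupDelaySeconds(intervalSeconds);
    }
}
```

Under this model, 10 workers on a 5 second interval produce 120 polls per minute, and a three-task workflow spends about 7.5 seconds just waiting to be picked up. A 1 second interval cuts that waiting to 1.5 seconds but raises the load to 600 polls per minute.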

Can this be simplified?

I believe so. After creating my sample app (as per the diagram above), I realised that we could simplify all this by building an app which imports the Conductor server and extends its functionality. Specifically, we could add a Rest Facade and a set of custom tasks.
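The idea of running custom tasks inside the server process can be sketched as follows. `EmbeddedDispatcher` is hypothetical and is not Conductor's internal API; the real server extension points are not shown here. When a workflow reaches a task with a registered in-process handler, the handler runs immediately. Any other task is left queued for conventional polling workers.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;
import java.util.function.UnaryOperator;

// Hypothetical sketch of in-process task dispatch; NOT Conductor's internal API.
class EmbeddedDispatcher {
    private final Map<String, UnaryOperator<String>> inProcessHandlers = new HashMap<>();
    // Tasks with no in-process handler wait here (type, input) for external polling workers.
    final Deque<Map.Entry<String, String>> queuedForPolling = new ArrayDeque<>();

    void register(String taskType, UnaryOperator<String> handler) {
        inProcessHandlers.put(taskType, handler);
    }

    // Called when a workflow reaches a task. In-process tasks run immediately and return
    // their (non-null) output; other tasks are queued and the result is empty.
    Optional<String> schedule(String taskType, String input) {
        UnaryOperator<String> handler = inProcessHandlers.get(taskType);
        if (handler == null) {
            queuedForPolling.add(Map.entry(taskType, input));
            return Optional.empty();
        }
        return Optional.of(handler.apply(input)); // e.g. an HTTP call to a downstream service
    }
}
```

The in-process path involves no polling round trip at all, which is where the first benefit listed next comes from.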

Benefits:

- No polling from workers looking for work (tasks).

- Fewer services on the diagram to keep running.

Downsides:

- You can no longer use the standard Conductor server Docker image, because you're now building your own (although the standard image has to be built from source anyway).

- Some of the library versions Conductor depends on seem outdated, and this approach may expose that issue further.

- This is arguably an even less standard setup than the recommended one.

Conclusion

Netflix Conductor has many benefits over Flowable. However, the recommended path of adoption seems to lead to Conductor coupling across all apps and a workflow that's impossible to test. I'd rather go for the approaches detailed above. If you have used Conductor, please drop a comment below; I'd love to hear your thoughts.
